On May 25, China’s most influential tech media outlet, 36kr, published an exclusive interview with DxChain co-founder Wei Wang about the limitations in blockchain development and the bottlenecks DxChain is committed to breaking through. The interview follows.

Decentralized big data is somewhat similar to the Pandoran biosphere, the vast fictional neural network in James Cameron’s Avatar, where thoughts, memories, and consciousness (big data storage and computation) can be transferred between the Trees of Souls (nodes).

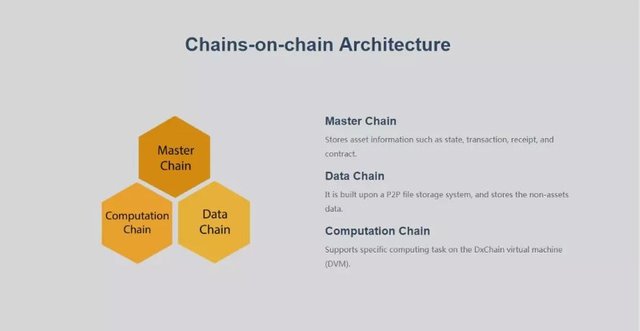

Although I had already come across many compelling public-chain projects, consensus mechanisms, and network structures, this was the first time I had heard of a “Chains-on-chain” architecture. Its creator is DxChain, a Silicon Valley-based blockchain startup.

Wei Wang, CTO of DxChain, explained that because it is difficult to meet the requirements of data storage, computation, and privacy protection simultaneously with only one chain, they have added two chains, one for computation and the other for data storage, to complement the master chain. Wang said the idea comes from the Lightning Network, a second-layer payment protocol that operates on top of a blockchain.

The master chain is used to record events, such as transactions, to improve the overall network performance so that it can support storage and high-speed computation for big data.

Now let’s look at what the two side chains do.

The data (storage) chain is responsible for storing metadata, which plays a role similar to an electronic directory. Metadata records how to retrieve file fragments. With the metadata, files can be extracted from an off-chain distributed file system through a data relay.
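The directory role of metadata can be illustrated with a small sketch. It is not DxChain’s actual format: the `FileMetadata` record, the fixed-size fragments, the SHA-256 addressing, and the in-memory dictionary standing in for the off-chain file system are all assumptions made for illustration.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class FileMetadata:
    """Hypothetical on-chain record: how to find and check each fragment."""
    file_name: str
    fragment_hashes: list  # SHA-256 hex digests, in file order


def split_and_store(name, data, fragment_size, store):
    """Split data into fragments, put them in the off-chain store, return metadata."""
    hashes = []
    for start in range(0, len(data), fragment_size):
        fragment = data[start:start + fragment_size]
        digest = hashlib.sha256(fragment).hexdigest()
        store[digest] = fragment  # the dict stands in for the distributed file system
        hashes.append(digest)
    return FileMetadata(name, hashes)


def retrieve(meta, store):
    """Rebuild the file from the store, checking each fragment against the metadata."""
    parts = []
    for digest in meta.fragment_hashes:
        fragment = store[digest]
        if hashlib.sha256(fragment).hexdigest() != digest:
            raise ValueError("fragment does not match its recorded hash")
        parts.append(fragment)
    return b"".join(parts)


# Usage: only the small metadata record would live on the chain.
off_chain_store = {}
original = b"id,age,country\n1,29,US\n"
meta = split_and_store("survey.csv", original, 8, off_chain_store)
restored = retrieve(meta, off_chain_store)
```

The point of the sketch is the split of responsibilities: the chain holds a compact, verifiable index, while the bulky fragments stay off the chain.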

The computation chain is responsible for recording how computation tasks are matched and carried out, such as which miner invokes what data and whether the work was completed (the computational equivalent of metadata). In this way, the whole network can verify computation results. In practice, only supernodes are needed to perform the verification.

The master chain uses Proof-of-Work (PoW) because it requires the highest level of security and stability. PoW has been tested for many years in Bitcoin and Ethereum 1.0. Both side chains use DPoS to determine who produces each block, but they adopt different approaches to determining who verifies an event.

The data (storage) chain uses PoS+PDP (Provable Data Possession) to verify the process and prevent the following three attacks:

Sybil Attack: a malicious node creates a large number of pseudonymous identities and uses them to gain a disproportionately large influence;

Outsourcing Attack: an attacker who is asked to prove that it is storing data obtains the data or a proof from other miners and pretends to have stored the data itself;

Generation Attack: an attacker keeps only a compact form of the data (such as a compressed file) and regenerates the data (decompresses the file) when verification is required, to prove that they have stored it.
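To give a sense of what verifying storage means, here is a toy challenge-response check. It is deliberately not PDP and does not by itself defeat the three attacks above: the function names are hypothetical, and the verifier is assumed to hold its own full copy of the data, which real possession proofs are designed to avoid.

```python
import hashlib
import secrets


def make_challenge():
    """Verifier side: a fresh random nonce, so an old proof cannot be replayed."""
    return secrets.token_bytes(16)


def prove(nonce, stored_data):
    """Prover side: hash the nonce together with the data it claims to hold."""
    return hashlib.sha256(nonce + stored_data).hexdigest()


def verify(nonce, proof, reference_data):
    """Verifier side: recompute the expected proof from its own copy and compare."""
    expected = hashlib.sha256(nonce + reference_data).hexdigest()
    return secrets.compare_digest(proof, expected)


# Usage: an honest miner passes, a miner holding different bytes does not.
data = b"fragment bytes"
nonce = make_challenge()
honest_ok = verify(nonce, prove(nonce, data), data)
cheater_ok = verify(nonce, prove(nonce, b"something else"), data)
```

Because the nonce changes on every challenge, a miner cannot answer from a cached proof; it needs access to the data at challenge time. Whether that access is local or borrowed from another miner is exactly what the outsourcing attack exploits, which is why stronger schemes are needed.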

The computation chain’s validation uses an original method that integrates Provable Data Computation (PDC) with a Verification Game. Generally, the way to verify the authenticity of a result in a decentralized environment is to repeat the computation, which reduces the chance that a false result passes undetected.

PDC is responsible for verifying computation: it finds the correct answer among a group of untrusted nodes, with only a small probability of a successful attack.
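The general idea of repeating a computation across untrusted nodes can be sketched as a simple majority vote. This is plain redundancy voting, not PDC or the Verification Game, and the probability estimate assumes each node is dishonest independently with probability `p`, which colluding nodes would violate.

```python
from collections import Counter
from math import comb


def majority_result(results):
    """Return the answer reported by a strict majority of nodes, or None."""
    if not results:
        return None
    answer, count = Counter(results).most_common(1)[0]
    return answer if count > len(results) / 2 else None


def prob_majority_dishonest(n, p):
    """Chance that more than half of n independent nodes are dishonest."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


# Usage: three nodes run the same task; one reports a wrong value.
accepted = majority_result([42, 42, 7])
no_consensus = majority_result([1, 2])
risk_one_node = prob_majority_dishonest(1, 0.1)
risk_three_nodes = prob_majority_dishonest(3, 0.1)
```

Under these assumptions, moving from one node to three lowers the chance of accepting a dishonest majority from 0.1 to 0.028, at the cost of running the task three times.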

Consider an application scenario. Research institute A wants to launch a fitness survey and is seeking samples that meet the requirements of “American, male, under 35 years old, and employed”. This is a computational task.

The master chain hands the computational task to the computation chain, which retrieves data from the data (storage) chain. Through cross-chain interaction, the two side chains generate a new dataset, which is then stored in the data (storage) chain, and inform the master chain.
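The computational task in this scenario is essentially a filter over stored records. A minimal sketch follows; the `Person` fields and the sample records are invented for illustration and say nothing about how DxChain represents data.

```python
from dataclasses import dataclass


@dataclass
class Person:
    country: str
    sex: str
    age: int
    employed: bool


def matches_survey(person):
    """The institute's criteria: American, male, under 35, and employed."""
    return (
        person.country == "US"
        and person.sex == "male"
        and person.age < 35
        and person.employed
    )


# Hypothetical records as they might be read from the storage side.
records = [
    Person("US", "male", 29, True),
    Person("US", "male", 41, True),
    Person("US", "female", 30, True),
    Person("CA", "male", 25, True),
    Person("US", "male", 22, False),
]

# The filtered list plays the role of the new dataset that is written back to storage.
sample = [p for p in records if matches_survey(p)]
```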

Miners who provide computational resources and storage will be rewarded.

DxChain adopts a relay to enable cross-chain interactions. The early BTC Relay is a smart contract on Ethereum that connects Ethereum and Bitcoin in a decentralized way. A relay can play a similar role in DxChain, bridging the master chain, computation chain, and data (storage) chain.

While Hadoop is widely deployed by tech behemoths such as Oracle, IBM, Google, and Microsoft, it has not been used in a decentralized environment to solve cross-company and trust issues in big data storage and computation. That’s because small files in Hadoop can still occupy a block of 64 MB or 128 MB, which is neither economical nor efficient. Besides, Hadoop talent is scarce, particularly core members of the Hadoop Project Management Committee (PMC).

However, DxChain is staffed with experienced Hadoop researchers who are eager to build an infrastructure for decentralized big data storage and computation.

DxChain founder Allan Zhang is a serial entrepreneur. He also founded Trustlook, a Silicon Valley-based security company. At Trustlook, Zhang discovered that the cost of packaging and acquiring data was too high.

Meanwhile, Wang believes that blockchain technology, with its decentralization, multiple nodes, and distributed storage, can not only reduce the cost of data retrieval but also ensure that data will not be tampered with or lost.

Wang said that DxChain will launch a test chain in three months and release its official network early next year. Trustlook will be the first application on DxChain.

DxChain now has six engineers, two community operators, and two public relations officers. Some of the engineers are responsible for researching and developing DApps to better understand developers’ preferences and needs.

DxChain has now completed its cornerstone fundraising and is conducting its institutional round.